How to clean up a project after 3 different teams

In this article, we describe the problems we faced on a project inherited from 3 different teams that had previously worked on it, and how we resolved them.

Project concept and strategy

During an appointment at a clinic, doctors dictate information about the patient using special devices. This audio is then converted into text (dedicated employees listen to the recording and type it up), the text is checked, and the system fills in a letter template. Finally, the letter is printed and sent to the patient. The letter is archived after a while, but it can be restored if necessary.

Project logic: audio - transcription - confirmation - mailing out.

Employees handle the part of the process related to letter automation and mailing on iPads. The part of the system for editing and approving documents can be used in the browser. But most of the work is done on the user's computer in the hospital.

There is one mandatory condition: no data may be lost. That’s why everything is stored in the MySQL database on the user's local machine, and the data is synchronized with the primary server.

Project peculiarities

- The system and its customer are in Europe;

- The system works in more than 10 clinics, and each clinic has its own way of operating;

- It takes a long time to persuade a clinic to upgrade to a new version: first comes deployment to the clinic’s test server, then 3 weeks of testing, and only after that deployment to the production server;

- The databases contain confidential information about patients, so each clinic has its own server.

What we inherited:

- Approximately 400,000 lines of source code in C# (plus several external libraries). Some source code was lost;

- At the beginning, 5,000 jobs a week were processed (each passing through all the necessary stages, interacting with all external services, etc.);

- Absence of documentation;

- Absence of tests and test data;

- Nobody on the customer’s side who knew everything about the system (someone who had been there since the project was created);

- Code written in a way that made it impossible to cover with unit tests;

- The MS SQL database consisted of approximately 120 tables;

- Performance issues;

- Usage of ADO.NET, Dapper, LINQ, Entity Framework 4.

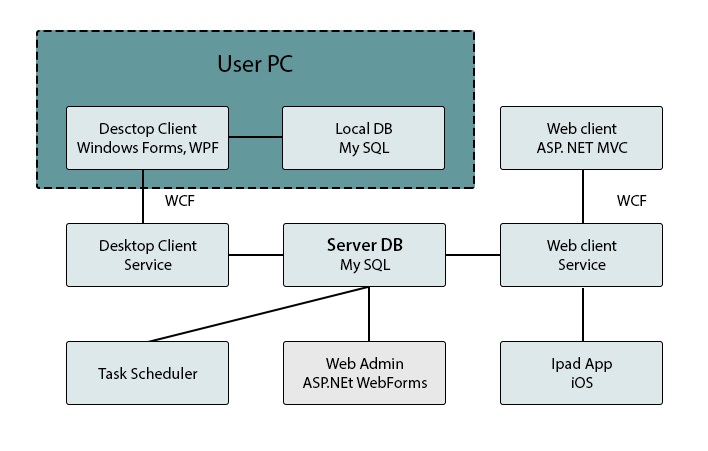

Part of the app’s architecture

App’s architecture

- Desktop Client is the doctor's working application; most of the system's functions are performed in it;

- Desktop Client Service - a service that is used by all Desktop Clients;

- Task Tracker - a system module responsible for moving jobs to the next stage and for other periodic operations;

- Pad App iOS - application for doctors on iPad;

- Web Admin is a web part for configuring workflows, access rights, devices, etc.;

- Web Client is a web part for doctors that has a limited list of possible functions.

Major achievements in 1.5 years

- The performance of the application has increased 10 times;

- The number of simultaneous users increased 5 times;

- The number of jobs processed per week reached 30,000;

- The customer began to trust our decisions.

How we achieved this.

Issue 1: Data privacy

Clinics work with real patients, and the data about them is confidential. It is kept on the clinics' servers and includes audio files with doctors’ dictations, official documents, etc. We needed a copy of each database for thorough testing, but because of data privacy, the hospitals weren’t eager to hand this data over.

We wrote an anonymization program that replaces all letters in the documents with placeholder characters and the contents of audio files with an array of zeros, so that, for example, “John Smith” becomes “Name 1”. Its development took 3 days. After that, we made an anonymized copy of the production database.
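The idea can be sketched roughly as follows. This is only an illustration in Python, not the original tool: the placeholder character, the function names and the `NameAnonymizer` class are all assumptions made for the example.

```python
# Hypothetical placeholder; the article does not say which character was used.
PLACEHOLDER = "x"


def anonymize_text(text: str) -> str:
    """Replace every letter with a placeholder; keep digits, spaces and punctuation."""
    return "".join(PLACEHOLDER if ch.isalpha() else ch for ch in text)


def anonymize_audio(audio: bytes) -> bytes:
    """Replace audio content with zeros of the same length."""
    return bytes(len(audio))


class NameAnonymizer:
    """Map each distinct name to a stable label such as 'Name 1'."""

    def __init__(self) -> None:
        self._labels: dict[str, str] = {}

    def anonymize(self, name: str) -> str:
        key = name.strip().lower()
        if key not in self._labels:
            self._labels[key] = f"Name {len(self._labels) + 1}"
        return self._labels[key]
```

Keeping the length of texts and audio unchanged means the anonymized copy still has roughly the same size and shape as the real data, which is what makes it useful for performance testing.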

Our mistake

We didn’t take into account that anonymization would take a long time (the actual time was about 5 days). It also put additional load on the same physical drive that held the production database. Fortunately, we were monitoring the situation, and at the first signs of a long disk read queue we stopped it. After that, we extended the program so that it could resume anonymizing the databases after an interruption and run only at night.
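A resumable, night-only run could look something like the sketch below. It is an assumption-based illustration, not the original program: the 22:00 to 06:00 window, the integer record ids and the `run_batch` function are all invented for the example.

```python
from datetime import datetime, time
from typing import Callable, Iterable, Optional


def is_night(now: datetime, start: time = time(22, 0), end: time = time(6, 0)) -> bool:
    """True if `now` falls in a window that wraps around midnight (hours are assumed)."""
    t = now.time()
    return t >= start or t < end


def run_batch(
    record_ids: Iterable[int],
    anonymize: Callable[[int], None],
    last_done: Optional[int],
    clock: Callable[[], datetime] = datetime.now,
) -> Optional[int]:
    """Process ids in ascending order, skipping those already done.

    Returns the new checkpoint (the last processed id) so the caller can
    persist it and resume later. Stops as soon as the night window closes.
    """
    for record_id in sorted(record_ids):
        if last_done is not None and record_id <= last_done:
            continue  # already anonymized in an earlier run
        if not is_night(clock()):
            break  # leave the rest for the next night
        anonymize(record_id)
        last_done = record_id
    return last_done
```

The caller would store the returned checkpoint somewhere durable (a file or a small table) and pass it back in on the next run.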

Conclusions

The benefits of the anonymization program were not particularly large, because the hospitals had different business processes and different data structures. Using an anonymized database gave short-term advantages and partially reassured the customer: he understood that it was a copy of the real data, so all new modules would be tested on realistic data and would run at a predictable speed.

Issue 2: Code that was not written for testing

Legacy code that needs to be extended without breaking it - what could be easier: add unit tests and carry on. But the quality of the legacy code was low, and it relied on singletons and the old Entity Framework, which is not suitable for unit testing. Tests were still necessary, so we chose to create integration tests. We also tried to write new features in a way that would let us cover them with unit tests later.

Database recovery tool and function

First, the programmer had to launch the program and run the data-saving tool. Then, at the beginning of a test, they called the recovery function, passing it the path to the saved data. The data-saving tool went through all the tables in the database and stored their data in XML. The recovery function then created a new database, restored the data in it and changed the connection strings so that the tests accessed the new database. To restore the data, we built INSERT commands and executed them with ADO.NET. This made us independent of the Entity Framework. A positive consequence is that the tool can now easily be ported to any other language.
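The save and restore steps can be illustrated with a small sketch. Note the substitutions: the original tool was written against MS SQL with ADO.NET, while this example uses Python's built-in `sqlite3` as a stand-in, and the XML layout and function names are assumptions. It also assumes the target database already has the schema, whereas the original recovery function created the database itself.

```python
import sqlite3
import xml.etree.ElementTree as ET


def dump_to_xml(conn: sqlite3.Connection) -> str:
    """Store the rows of every user table as XML (values are saved as text)."""
    root = ET.Element("database")
    tables = conn.execute(
        "SELECT name FROM sqlite_master "
        "WHERE type = 'table' AND name NOT LIKE 'sqlite_%'"
    ).fetchall()
    for (table,) in tables:
        cursor = conn.execute(f'SELECT * FROM "{table}"')
        columns = [d[0] for d in cursor.description]
        table_el = ET.SubElement(root, "table", name=table)
        for row in cursor:
            row_el = ET.SubElement(table_el, "row")
            for column, value in zip(columns, row):
                if value is not None:  # NULL columns are simply omitted
                    ET.SubElement(row_el, "col", name=column).text = str(value)
    return ET.tostring(root, encoding="unicode")


def restore_from_xml(conn: sqlite3.Connection, xml_text: str) -> None:
    """Insert the saved rows into an empty database that has the same schema."""
    for table_el in ET.fromstring(xml_text):
        table = table_el.get("name")
        for row_el in table_el:
            columns = [c.get("name") for c in row_el]
            values = [c.text or "" for c in row_el]  # empty element means ''
            if not columns:  # the row consisted of NULLs only
                conn.execute(f'INSERT INTO "{table}" DEFAULT VALUES')
                continue
            column_list = ", ".join(f'"{c}"' for c in columns)
            marks = ", ".join("?" for _ in columns)
            conn.execute(
                f'INSERT INTO "{table}" ({column_list}) VALUES ({marks})', values
            )
    conn.commit()
```

Because the restore step uses nothing but plain INSERT statements with parameters, it does not depend on any ORM. The sketch relies on the column types of the target schema to turn the stored text back into numbers.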

If you prepare the database correctly, the recovery takes 1 second. Since the CI ran the tests at night, this was acceptable.

The tool caught on with the testers: when they began testing with Ranorex, they added command-line arguments to it and started using it to restore the database there too.

Within 3 years, the testing team grew to 5 people.

Conclusions

- You will inevitably have to reinvent the wheel for your legacy code;

- If you are thinking about integration tests, think about running them at night;

- Restoring the database can be very convenient for simulating data;

- Do not store the data for restoring the database in the test project. It's better to put it in a zip archive on Dropbox and store only a link to the archive;

- A positive side effect of the restoration was that it verified the correctness of changes to the database structure, which we have to make from time to time.

Issue 3: Performance counters on the target machine

The question we had during the first year was how to show the state of the inherited system and the fact that it was gradually ceasing to cope with the load. An effective approach is to collect performance counters once a week. There are many counters; they can be plotted as graphs, exported to Excel, analyzed, etc. It is a pity that we did not do this from the very beginning, when there were 5,000 jobs per week.
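Once the counters are exported, comparing periods is a small scripting task. The sketch below is a hedged illustration in Python: it assumes a simplified CSV with a `timestamp` column in ISO format and one column per counter, which is not the exact layout Windows produces, and the `weekly_averages` function and the counter name are invented for the example.

```python
import csv
import io
from collections import defaultdict
from datetime import datetime
from statistics import mean


def weekly_averages(csv_text: str, counter: str) -> dict[str, float]:
    """Average one counter column per ISO week.

    Assumes a 'timestamp' column in ISO format and one column per counter.
    """
    buckets = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        year, week, _ = datetime.fromisoformat(row["timestamp"]).isocalendar()
        buckets[f"{year}-W{week:02d}"].append(float(row[counter]))
    return {week: mean(values) for week, values in sorted(buckets.items())}
```

A steadily growing weekly average for a counter such as the disk queue length is the kind of trend that is easy to show to a customer.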

Advantage of the approach

This is a built-in feature of Windows, so we did not even have to persuade the client to start collecting statistics.

Conclusions

We should have started collecting performance counters at the very beginning of the project. Even so, it is now quite interesting to compare them once a month.

Issue 4: Do not trust RTF to HTML converters

In the legacy code, documents were stored in RTF format. The client asked us to improve document editing in the web client. There was a license for TxTextControl v19, so the editor was built with this component. Later it became clear that converting from RTF to HTML and back causes problems: the font size shifts from 10 to 10.5, indented paragraphs come out wrong, etc. The help desk recommended upgrading to version 20. After detailed research, it turned out that version 20 was quite buggy.

Conclusions

- Do not expect the help desk to solve all your problems with the editor;

- Converting from RTF to HTML and back brings a lot of problems.

Issue 5: Recovering the lost part for iOS

In addition to the web part, there are applications for the iPad, and the source code for that part was lost. After some searching, we tried to restore the source code by decompiling. This can be done using the Developer Bundle from RedGate, which includes Ants Profiler. In general, the quality of decompilation is good: within 2 hours of analysis, we found how authentication for iOS was implemented in the WCF server and restored it in the latest version of the program.

Conclusions

Developer Bundle from RedGate really helped us out.

Issue 6: The profiler

We faced a performance issue: after 40 minutes of work, printing delays began. We checked everything we could on the server side, but found nothing wrong. At that point, we decided to try Ants Profiler.

Advantages of Ants Profiler:

- Easy to use, although installing it on the local machine requires administrator rights. You can start a program under it in 3 clicks;

- A fully featured 14-day trial. If you need to run it once on the target machine, it is the most suitable choice;

- Visual reports;

- Can log SQL requests, disk accesses, network requests.

After the client had run under the profiler for 40 minutes, finding the issue took 3 minutes. The list of abbreviations kept accumulating, and every typed character triggered an abbreviation check against it, which caused the delays.

The disadvantage is that at the highest level of profiling detail, it does not work well with COM objects. But after some experiments, we found that procedure-level detail was enough for our purposes, and we have been using this great tool for more than a year now.
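This kind of slowdown is easy to reproduce in miniature. The sketch below is a hypothetical reconstruction in Python, not the original C# code: the article does not show how the list grew or how it was fixed, so both classes and their `reload` and `matches` methods are assumptions.

```python
class SlowAbbreviations:
    """Each reload appends to a list, so duplicates pile up during a session."""

    def __init__(self) -> None:
        self.items: list[str] = []

    def reload(self, abbreviations: list[str]) -> None:
        self.items.extend(abbreviations)  # grows on every reload

    def matches(self, word: str) -> bool:
        # Called on every keystroke; scans the whole, ever-growing list.
        return any(word == item for item in self.items)


class FastAbbreviations:
    """Each reload replaces a set, so the size stays constant."""

    def __init__(self) -> None:
        self.items: set[str] = set()

    def reload(self, abbreviations: list[str]) -> None:
        self.items = set(abbreviations)  # replaces instead of accumulating

    def matches(self, word: str) -> bool:
        return word in self.items  # hash lookup, independent of session length
```

In the first variant, the cost of each keystroke grows with the time the client has been running, which matches the symptom of delays appearing only after about 40 minutes.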

Conclusions

Find the required level of detail in Ants Profiler.

Issue 7: Database performance at the snapshot level

We work in healthcare, where rolling out updates takes a long time. Approximately six months ago, when the number of jobs increased to 20,000 per week, the client ran into problems: the system could not keep up with the task queue, and jobs stopped moving through the workflow. The cause was that a table was locked while it was being read, so the update commands on it were not executed as quickly as needed.

We had to optimize the application without changing it on the server. If you cannot change the code, the logical thing to optimize is the database. In this case, the indexes in the database had already been created.

After a week of analysis, we tried switching the database to snapshot mode. This gave excellent results: the queue dropped from 400 jobs to 12, which is within the norm.
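For reference, MS SQL exposes row versioning through two database options. The article does not say which of them was used, so the Python helper below simply builds both T-SQL statements; the `snapshot_statements` function and the database name in the usage note are hypothetical.

```python
def snapshot_statements(database: str) -> list[str]:
    """Build T-SQL that turns on row versioning for one database."""
    # Bracket-quote the name and escape any closing bracket inside it.
    name = "[" + database.replace("]", "]]") + "]"
    return [
        # Lets sessions opt in to SNAPSHOT isolation explicitly.
        f"ALTER DATABASE {name} SET ALLOW_SNAPSHOT_ISOLATION ON;",
        # Makes the default READ COMMITTED level read row versions
        # instead of taking shared locks, without application changes.
        f"ALTER DATABASE {name} SET READ_COMMITTED_SNAPSHOT ON WITH ROLLBACK IMMEDIATE;",
    ]
```

Calling `snapshot_statements("ClinicDb")` returns two strings to run in a maintenance window. `WITH ROLLBACK IMMEDIATE` rolls back open transactions in other sessions, so it should be used with care on a production server.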

Conclusions

In addition to creating indexes, remember the snapshot mechanism that MS SQL has.

Issue 8: Reports

There was an administration panel for building different types of reports. These reports were not written efficiently, and calculating them took up to 40 minutes. They included, for example, the productivity of the typists. Reports often led to table locks (this was before we solved the database performance issue with snapshots). By design, these reports showed information for the previous day. At the beginning of the working day, employees ran a report through the administration panel, after which the file appeared on a network drive where everyone could look at it. Because of the table locks, all update and delete operations in the database stalled from time to time.

Command-line tool

To solve this, we created a command-line tool that is run by the Windows Task Scheduler, and removed the reports from the admin panel. The logic stayed the same, so the report is ready by the beginning of the working day.
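A minimal sketch of such a tool is shown below. It is written in Python for illustration only: the original reads from the production database, while this version reads a CSV file, and the column names `typist` and `finished` as well as the argument names are assumptions.

```python
import argparse
import csv
from collections import Counter
from datetime import date, timedelta


def productivity(rows: list[dict], day: date) -> Counter:
    """Count finished jobs per typist for one day."""
    return Counter(r["typist"] for r in rows if r["finished"] == day.isoformat())


def main(argv: list[str]) -> int:
    parser = argparse.ArgumentParser(description="Write the daily productivity report.")
    parser.add_argument("jobs_csv", help="input with 'typist' and 'finished' columns")
    parser.add_argument("report_csv", help="output path, e.g. on the shared drive")
    parser.add_argument(
        "--day",
        type=date.fromisoformat,
        default=date.today() - timedelta(days=1),  # the previous day by default
        help="report date in YYYY-MM-DD format",
    )
    args = parser.parse_args(argv)

    with open(args.jobs_csv, newline="") as f:
        counts = productivity(list(csv.DictReader(f)), args.day)

    with open(args.report_csv, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["typist", "jobs"])
        writer.writerows(sorted(counts.items()))
    return 0
```

With an entry point that calls `main(sys.argv[1:])`, the script can be registered as a daily task that runs before the working day starts, so the heavy query no longer competes with users for the tables.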

Conclusions

Analyze how often reports are created and by when they need to be ready.

Perhaps our decisions were not perfect. But they allowed us to keep developing and scaling a product that is used by thousands of doctors. We carry on developing it so that our client and his customers are satisfied.